Archives for learning rate

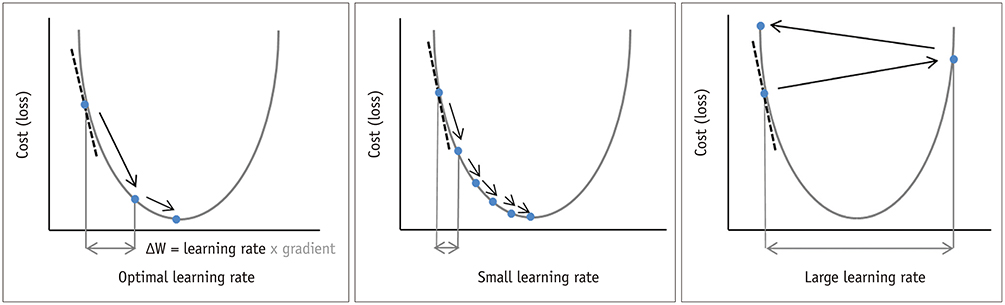

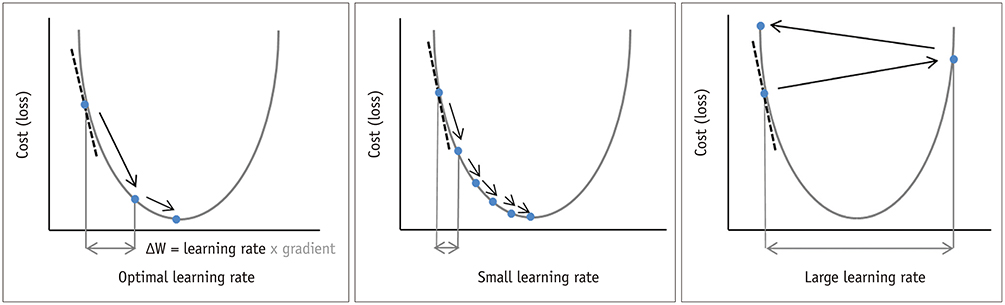

In a neural network, the loss measures how far the network's predictions are from the expected outputs. The higher the loss, the poorer the network's performance, which is why training always tries to minimize the loss.

The learning rate is an important hyperparameter in neural networks. We often spend a lot of time tuning it, and even after trying several different rates we may still not get the optimum result.
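To make the effect of the learning rate concrete, here is a minimal sketch that is not taken from the post itself. It runs plain gradient descent on the toy loss f(w) = w², and the function name `gradient_descent`, the starting point, and the three rates are all illustrative assumptions.

```python
def gradient_descent(lr, steps=20, w0=5.0):
    """Minimize the toy loss f(w) = w**2 and return the final loss."""
    w = w0
    for _ in range(steps):
        grad = 2.0 * w   # derivative of w**2 with respect to w
        w -= lr * grad   # gradient descent update
    return w ** 2


# Hypothetical rates chosen to show three regimes: too small, reasonable, too large.
losses = {lr: gradient_descent(lr) for lr in (0.001, 0.1, 1.1)}
for lr, loss in losses.items():
    print(f"lr={lr}: final loss = {loss:.4g}")
```

With the smallest rate the loss barely moves from its starting value of 25 in 20 steps, with the middle rate it drops close to zero, and with the largest rate each update overshoots the minimum and the loss grows. Real networks have far more complicated loss surfaces, so this only illustrates the general trade-off.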

This post explains the plateau phenomenon and its remedies, a topic related to the optimization of ML models.

A key balancing act in machine learning is choosing an appropriate level of model complexity: if the model is too complex, it will fit the data used to construct the model very well but generalise poorly to unseen data (overfitting); if the complexity is too low the model won’t capture all the information in the…
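The complexity trade-off described in this excerpt can be sketched with polynomial regression, where the polynomial degree stands in for model complexity. This is an illustrative example under assumptions that are not in the original post: the sine-shaped target, the noise level, the sample sizes, and the degrees 1, 3, and 10 are all made up for the demonstration.

```python
import numpy as np
from numpy.polynomial import Polynomial

rng = np.random.default_rng(0)


def make_data(n):
    """Noisy samples of a sine curve (an assumed toy target)."""
    x = rng.uniform(-1.0, 1.0, n)
    y = np.sin(np.pi * x) + rng.normal(0.0, 0.2, n)
    return x, y


def mse(model, x, y):
    """Mean squared error of a fitted polynomial on (x, y)."""
    return float(np.mean((model(x) - y) ** 2))


x_train, y_train = make_data(20)    # small training set
x_test, y_test = make_data(200)     # unseen data from the same distribution

results = {}
for degree in (1, 3, 10):
    model = Polynomial.fit(x_train, y_train, degree)  # least-squares fit
    results[degree] = (mse(model, x_train, y_train), mse(model, x_test, y_test))
    print(f"degree={degree}: train MSE={results[degree][0]:.4f}, "
          f"test MSE={results[degree][1]:.4f}")
```

The training error can only go down as the degree increases, because each higher-degree model contains the lower-degree ones. The error on unseen data is what reveals the trade-off: a degree that is too low typically misses the shape of the curve, while a degree that is too high tends to chase the noise in the training points.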

The post Why Learning Rate Is Crucial In Deep Learning appeared first on Analytics India Magazine.