Archives for Ensemble Learning

Gradient Boosting Decision Tree (GBDT) is a popular machine learning algorithm. It has several effective implementations, such as XGBoost, that adopt many optimization techniques. However, their efficiency and scalability are still unsatisfactory when the data has a large number of features.

The post Complete Guide To LightGBM Boosting Algorithm in Python appeared first on Analytics India Magazine.

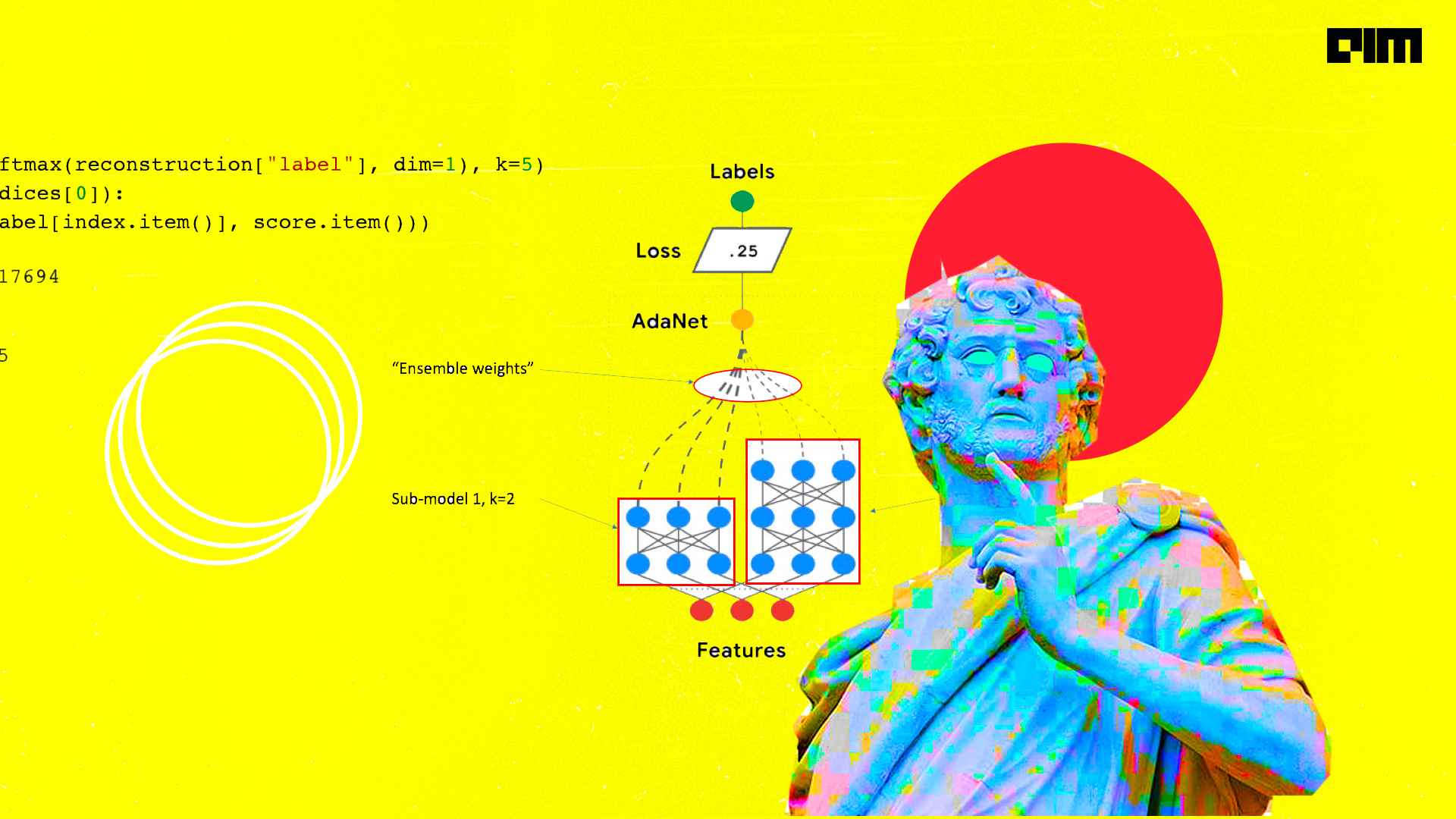

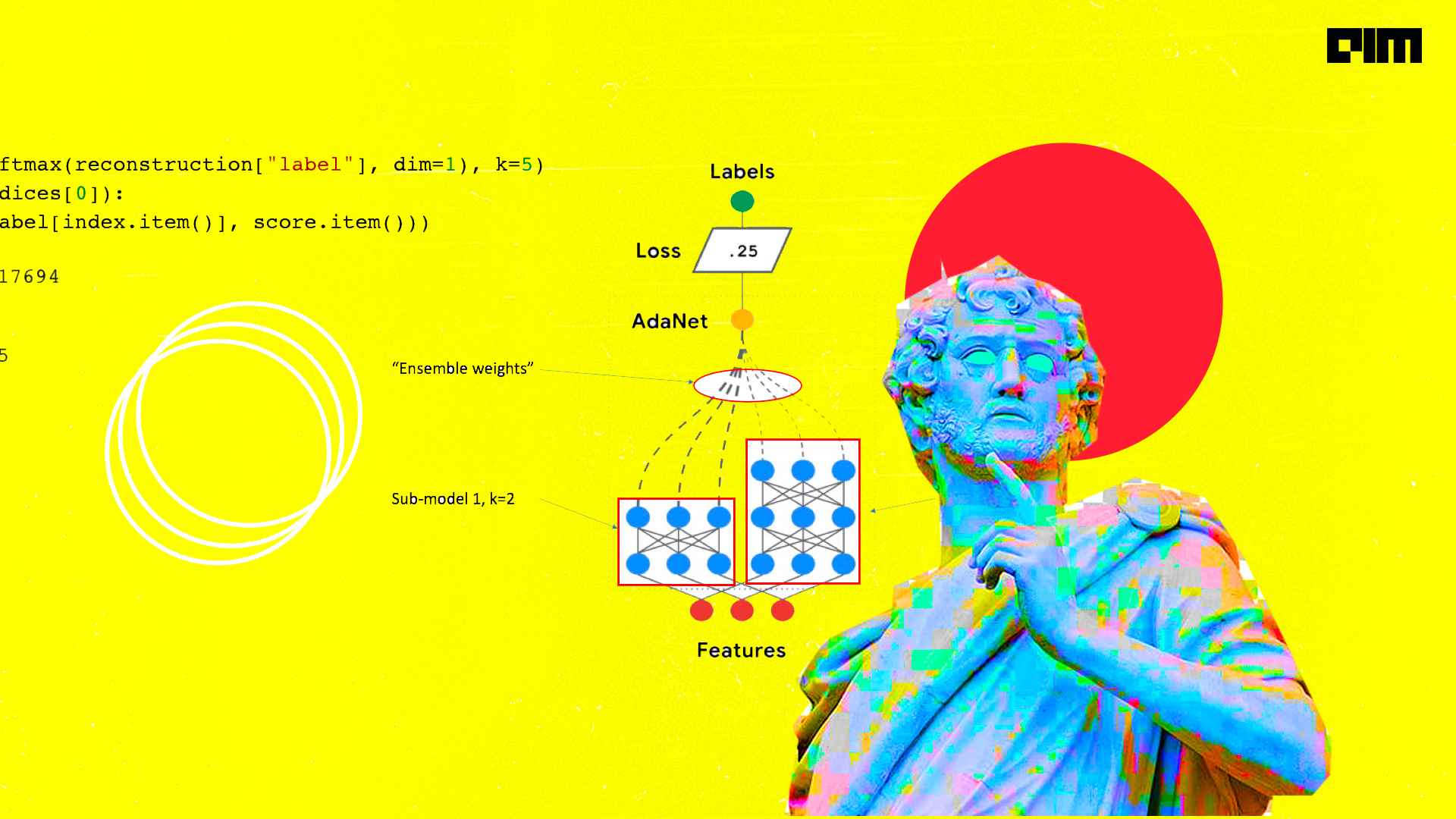

Ensemble learning is the process of combining more than one machine learning model, for example by averaging or voting over their predictions, to obtain better performance.

The post Comprehensive Guide To Ensemble Methods For Data Scientists appeared first on Analytics India Magazine.

In recent years, ensemble learning, and boosting in particular, has become one of the most promising approaches to data analysis in machine learning. Ensemble methods were originally proposed on the principle of generating multiple predictions and then averaging or voting among the individual classifiers. Researchers from the Institute for Medical Biometry, Germany, have identified the…

The post AdaBoost Vs Gradient Boosting: A Comparison Of Leading Boosting Algorithms appeared first on Analytics India Magazine.
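
The averaging and voting principle mentioned in the excerpt above can be illustrated with a minimal sketch. The function names `majority_vote` and `average_probabilities`, and the toy labels and probabilities, are assumptions made for illustration; they do not come from the linked article.

```python
from collections import Counter


def majority_vote(predictions):
    """Return the label predicted by the most classifiers (hard voting)."""
    # most_common orders equally frequent labels by first occurrence,
    # so a tie is resolved in favour of the earliest classifier.
    return Counter(predictions).most_common(1)[0][0]


def average_probabilities(probabilities):
    """Average per-class probability vectors across classifiers (soft voting)."""
    n_models = len(probabilities)
    n_classes = len(probabilities[0])
    return [sum(p[c] for p in probabilities) / n_models for c in range(n_classes)]


# Hypothetical outputs of three classifiers for a single sample.
labels = ["cat", "dog", "cat"]
probs = [[0.6, 0.4], [0.3, 0.7], [0.8, 0.2]]  # columns: [P(cat), P(dog)]

hard = majority_vote(labels)          # label chosen by most classifiers
soft = average_probabilities(probs)   # mean probability for each class
print(hard, soft)
```

Hard voting only needs predicted labels, while averaging needs each classifier to output probabilities; which one works better depends on how well calibrated the individual classifiers are.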

In this article, I’ll be discussing how XGBoost works internally to make decision trees and deduce predictions.

The post Understanding XGBoost Algorithm In Detail appeared first on Analytics India Magazine.

In recent times, ensemble techniques have become popular among data scientists and enthusiasts. Random Forest and Gradient Boosting algorithms used to win most data science competitions and hackathons, but over the last few years XGBoost has been performing better than other algorithms on problems involving structured data. Apart from its performance, XGBoost is also recognized for its speed, accuracy and scale. XGBoost is built on the Gradient Boosting framework.

The post Complete Guide To XGBoost With Implementation In R appeared first on Analytics India Magazine.

In this article, we will create an ensemble of 10 convolutional neural network (CNN) models and apply it to multi-class prediction on the MNIST handwritten digit dataset.

The post Hands-on Guide To Create Ensemble Of Convolutional Neural Networks appeared first on Analytics India Magazine.

In this article, we will use a heterogeneous collection of weak learners to build a hybrid ensemble learning model. Different types of machine learning algorithms are grouped together in this task to work on a classification problem. We will show the performance of the individual weak learners and then the performance of our hybrid ensemble model.

The post A Hands-on Guide To Hybrid Ensemble Learning Models, With Python Code appeared first on Analytics India Magazine.
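
As a rough illustration of the hybrid idea in the excerpt above, the sketch below combines three different kinds of deliberately simple learners by majority vote. The classes `MeanThresholdStump`, `NearestCentroid`, `OneNearestNeighbour` and `HybridEnsemble`, along with the one-dimensional toy data, are hypothetical and are not taken from the linked article, which may use different models and a different combination rule.

```python
from collections import Counter


class MeanThresholdStump:
    """Weak learner: predicts 1 when x exceeds the mean training input.

    It ignores the labels and assumes class 1 has the larger values.
    """

    def fit(self, X, y):
        self.threshold = sum(X) / len(X)
        return self

    def predict(self, x):
        return 1 if x > self.threshold else 0


class NearestCentroid:
    """Weak learner: predicts the class whose mean input is closest to x."""

    def fit(self, X, y):
        self.centroids = {}
        for label in set(y):
            members = [xi for xi, yi in zip(X, y) if yi == label]
            self.centroids[label] = sum(members) / len(members)
        return self

    def predict(self, x):
        return min(self.centroids, key=lambda label: abs(x - self.centroids[label]))


class OneNearestNeighbour:
    """Weak learner: predicts the label of the closest training point."""

    def fit(self, X, y):
        self.data = list(zip(X, y))
        return self

    def predict(self, x):
        return min(self.data, key=lambda pair: abs(x - pair[0]))[1]


class HybridEnsemble:
    """Combines heterogeneous learners by majority vote."""

    def __init__(self, learners):
        self.learners = learners

    def fit(self, X, y):
        for learner in self.learners:
            learner.fit(X, y)
        return self

    def predict(self, x):
        votes = [learner.predict(x) for learner in self.learners]
        return Counter(votes).most_common(1)[0][0]


# Toy one-dimensional binary classification data (made up for illustration).
X = [1.0, 2.0, 3.0, 4.0, 8.0, 9.0]
y = [0, 0, 0, 0, 1, 1]

ensemble = HybridEnsemble(
    [MeanThresholdStump(), NearestCentroid(), OneNearestNeighbour()]
).fit(X, y)

individual = [learner.predict(5.0) for learner in ensemble.learners]
ensemble_prediction = ensemble.predict(5.0)
print(individual, ensemble_prediction)
```

At `x = 5.0` the stump disagrees with the other two learners, and the vote follows the majority. With an odd number of binary learners a tie cannot occur.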